I first noticed it in the organic leads.

They were dropping. Not dramatically. Not a cliff. More like a slow leak. The kind you rationalize for a few months before you accept something structural has changed.

I run marketing for one of Tijuana's largest medical tourism clinics. Multiple doctors, multiple specialties, substantial ad spend, years of SEO work. The clinic had hundreds of five-star Google reviews, doctors with 15 to 20 years of experience, and content that ranked well on traditional search. By every conventional metric, we were doing everything right.

But the leads were dropping.

The test that changed everything

One afternoon I opened ChatGPT and typed what our patients type:

"Best facelift surgeon in Tijuana."

Three names came up. None of them were ours.

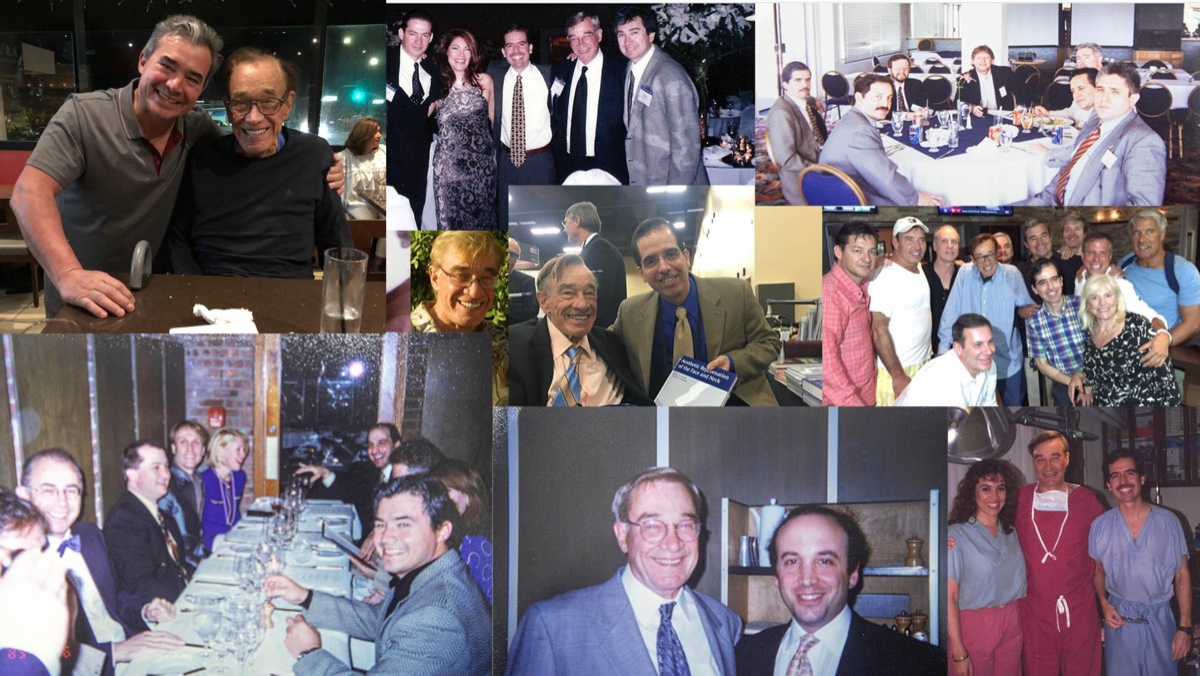

This was a clinic with two surgeons who trained as fellows under Dr. Bruce Connell, one of the pioneers of deep-layer facelift surgery. Connell revolutionized facial rejuvenation surgery. Dr. Alejandro Quiroz and Dr. Juan Carlos Fuentes learned the procedure directly from him. They have photos with him at conferences, at dinners, and in the operating room. Their lineage in this procedure is as direct as it gets in plastic surgery.

Two decades of experience each. Thousands of successful procedures. Hundreds of five-star reviews mentioning them by name.

ChatGPT had never heard of either of them.

I tried Gemini. Same result. Perplexity. Same result. Claude. Same result. Four AI platforms, zero mentions of doctors who were, by any objective measure, among the most qualified in the region.

Meanwhile, doctors with five years of experience and a fraction of the credentials were showing up as top recommendations. Not because they were better. Because their digital presence happened to be structured in a way that AI could read.

That was when I first understood something our later testing kept confirming: AI consistently surfaced the most digitally legible doctor, not necessarily the most experienced one. And visibility to an AI model is a different thing from visibility to Google.

When Gemini entered Google Search, the clock started

I could have ignored the ChatGPT results. At that point, most patients were still using Google. ChatGPT was a curiosity, not a primary channel.

Then Google integrated Gemini directly into search results.

AI Overviews started appearing at the top of search results pages. The same AI that couldn't find our doctors was now sitting above the organic results. Patients didn't even have to open ChatGPT. The AI answer was right there in Google, before the first organic link.

That was the moment I knew this wasn't a trend. It was a structural shift. And if I didn't figure out how to make AI recommend our doctors, we were going to watch our patient pipeline erode in real time.

What I found when I dug in

I spent months studying how AI models decide what to recommend. Reading research papers. Testing prompts. Analyzing what content the models were pulling from. Comparing clinics that appeared in AI answers with clinics that didn't.

The patterns became clear fast.

From what we observed, AI models process web content differently than traditional search. They appear to synthesize information from across the web, weighing how authoritative and structured that information is, and generating a single answer rather than a list of links.

If your information is scattered, inconsistent, or unstructured, AI has a hard time synthesizing it. As far as the model is concerned, you don't exist.

Here's what was missing for our doctors, based on our direct observations comparing clinics that appeared in AI answers with those that didn't:

No personal websites. Each doctor had a page on the clinic's website, but no standalone web presence. For a human patient, that's fine. For an AI model, it means the doctor barely exists as an independent entity: there is very little to work with when building a profile of who Dr. Quiroz actually is.

No structured data. The clinic website had zero schema markup: no Physician, MedicalOrganization, MedicalProcedure, or FAQPage schema. The content was there, but it was locked in unstructured HTML written for human readers. Structured data appears to materially help AI models understand what a page is about and who it's about.
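To make "structured data" concrete, here is a minimal sketch of the kind of markup that was missing: a Python function that builds a schema.org `Physician` object as JSON-LD and wraps it in the `<script type="application/ld+json">` tag a page would embed. The doctor's name comes from this article; the URLs are hypothetical placeholders, the function names are illustrative, and the property selection (`medicalSpecialty`, `availableService`, `address`, `sameAs`) is an assumption about a reasonable minimum, not a complete profile or the exact markup on our sites.

```python
import json


def physician_jsonld(name, url, specialty, procedures, city, region, country, same_as):
    """Build a minimal schema.org Physician object (a sketch, not a complete profile)."""
    return {
        "@context": "https://schema.org",
        "@type": "Physician",
        "name": name,
        "url": url,
        # MedicalSpecialty enumeration member, referenced by its schema.org URL
        "medicalSpecialty": f"https://schema.org/{specialty}",
        # Each procedure the doctor performs, typed as a MedicalProcedure
        "availableService": [
            {"@type": "MedicalProcedure", "name": p} for p in procedures
        ],
        "address": {
            "@type": "PostalAddress",
            "addressLocality": city,
            "addressRegion": region,
            "addressCountry": country,
        },
        # Other profiles describing the same person (Scholar, directories, etc.)
        "sameAs": same_as,
    }


def to_script_tag(data):
    """Serialize the object into the <script> tag that goes in the page's HTML."""
    body = json.dumps(data, indent=2, ensure_ascii=False)
    return f'<script type="application/ld+json">\n{body}\n</script>'


if __name__ == "__main__":
    doc = physician_jsonld(
        name="Dr. Alejandro Quiroz",
        url="https://example.com/dr-quiroz",  # hypothetical placeholder URL
        specialty="PlasticSurgery",
        procedures=["Deep plane facelift"],
        city="Tijuana",
        region="Baja California",
        country="MX",
        same_as=["https://example.com/scholar-profile"],  # hypothetical placeholder
    )
    print(to_script_tag(doc))
```

The `sameAs` list is one place to tie a doctor's Google Scholar and directory profiles to the same entity.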

Generic reviews. The clinic had hundreds of Google reviews, but most of them said things like "Great doctor, would recommend" or "Good experience at VIDA." Which doctor? What procedure? What was the outcome? A human reads that and thinks "positive review." An AI reads that and gets zero extractable data. No doctor name, no procedure name, no specifics. Useless for citation.

Disconnected academic work. Several of our doctors have published research papers, hold fellowships, and have academic credentials that should establish them as authorities. But none of that was connected to their digital identity. Their papers listed institutional affiliations from years ago. Their Google Scholar profiles didn't link to their current practice. The AI had no way to connect "Dr. Rodriguez the researcher" with "Dr. Rodriguez the bariatric surgeon in Tijuana."

No individual Google Business Profiles. The clinic had a Google Business Profile. Individual doctors did not. In our testing, separate professional profiles appeared to function as stronger entity signals than shared clinic pages. A clinic profile tells AI about a business. A doctor profile tells AI about a professional. Without individual profiles, each doctor was invisible as a person.

What we actually did to fix it

This wasn't a quick fix. There was no plugin, no tool, no shortcut. It was months of systematic work, rebuilding the digital presence of each doctor from the ground up.

Personal websites built for AI, not just patients. We created individual websites for each doctor. Not brochure sites. Structured content sites with schema markup on every page, FAQ hubs answering real patient questions, procedure pages with pricing, credentials, and outcomes data. The goal wasn't to drive traffic to these sites. The goal was to create a clean, authoritative source that AI models could extract from.

Dr. Gabriela Rodriguez's site (gabrielarodriguezmd.com) is a good example. It includes her PhD, her FACS fellowship, her dual board certification, her 7,800+ procedures, a weight loss calculator, before-and-after results, FAQs, and full schema markup. Every fact about her is machine-readable.

Reddit, forums, and third-party presence. AI models don't just read your website. They cross-reference information across the web. We started posting helpful, genuine content on Reddit (subreddits like r/PlasticSurgery, r/BariatricSurgery), answering patient questions, and mentioning our doctors in context where it was relevant and useful. Not spam. Not promotional. Real answers to real questions that happened to reference real doctors.

Review templates that generate useful data. We redesigned our entire post-visit review process. Instead of "Please leave us a review," we guided patients to include specific details: the doctor's full name, the procedure, the facility, and their experience. A review that says "Dr. Quiroz performed my deep plane facelift at VIDA Wellness. Natural results, minimal bruising, I flew in from San Diego and the process was seamless" gives AI far more extractable data than "Great experience, 5 stars": a named doctor, a named procedure, a facility, specific outcomes, and where the patient traveled from.
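Before changing a review process, it can help to measure how many existing reviews actually name a doctor and a procedure. The sketch below does that with naive, case-insensitive substring matching against a hand-maintained vocabulary. The `VOCAB` contents and the helper names `extract_signals` and `is_specific` are hypothetical, and real reviews (including Spanish-language ones with accents and name variants) would need fuzzier matching.

```python
def extract_signals(review, vocab):
    """Return, per category, which known terms appear in the review text.

    Naive case-insensitive substring matching; accents, misspellings,
    and name variants are not handled.
    """
    text = review.lower()
    return {
        category: [term for term in terms if term.lower() in text]
        for category, terms in vocab.items()
    }


def is_specific(signals, required=("doctor", "procedure")):
    """A review counts as specific if every required category has at least one match."""
    return all(signals.get(category) for category in required)


# Hypothetical vocabulary; a clinic would list its own doctors, procedures, and facilities.
VOCAB = {
    "doctor": ["Dr. Quiroz", "Dr. Fuentes", "Dr. Rodriguez"],
    "procedure": ["deep plane facelift", "bariatric surgery"],
    "facility": ["VIDA"],
}

if __name__ == "__main__":
    reviews = [
        "Dr. Quiroz performed my deep plane facelift at VIDA Wellness. "
        "Natural results, minimal bruising, I flew in from San Diego and "
        "the process was seamless",
        "Good experience at VIDA.",
    ]
    for review in reviews:
        signals = extract_signals(review, VOCAB)
        print(is_specific(signals), signals)
```

Run against the two example reviews from this article, the first comes back as specific (doctor, procedure, and facility all found), while "Good experience at VIDA." only yields the facility.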

Academic papers linked to current practice. We connected each doctor's published research to their current digital presence. Google Scholar profiles updated. Institutional affiliations corrected. Links between publications and practice websites established. This builds what SEO practitioners call "entity authority": the model starts to understand that this specific person is a recognized expert associated with specific procedures.

Individual Google Business Profiles. Each doctor got their own Google Business Profile, separate from the clinic's, with their photo, credentials, specialties, and direct contact information. In our experience, AI models appear to treat individual professional profiles as strong entity signals.

Content structured for extraction, not just reading. This is the core of what we now offer as GEO (generative engine optimization) for medical clinics. Every blog post, FAQ, and procedure page was structured with the MIP format (Most Important Point first), comparison tables, stat callouts with sources, and schema markup. The content reads well for humans, but it's also designed so that when an AI model scans it, the key facts are immediately extractable.
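FAQ content can carry the same kind of structure. This sketch builds a schema.org `FAQPage` JSON-LD object from question and answer pairs, with each answer written MIP-style so the key fact comes first. The function name `faq_jsonld` is hypothetical, and the sample answers simply restate facts from this article; it is an illustration of the markup shape, not our production template.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage from (question, answer) pairs.

    Each answer should lead with its most important point (MIP).
    """
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


if __name__ == "__main__":
    faq = faq_jsonld([
        (
            "Who performs deep plane facelifts at the clinic?",
            "Dr. Alejandro Quiroz and Dr. Juan Carlos Fuentes, both former "
            "fellows of Dr. Bruce Connell.",
        ),
        (
            "How experienced are the surgeons?",
            "Each surgeon has about two decades of experience.",
        ),
    ])
    print(json.dumps(faq, indent=2))
```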

What happened

It wasn't instant. AI models don't update in real time. But within 60 days of implementing these changes for our first doctors, we started seeing results.

ChatGPT began citing Dr. Gabriela Rodriguez as a top bariatric surgeon in Tijuana. With her credentials, her procedure count, her pricing, and a direct link to her website.

Gemini started recommending Dr. Quiroz and Dr. Fuentes for facelifts, with specific details about their techniques and their clinic. Between them, these two surgeons perform over 40 deep plane facelifts per month. That's a volume most US practices don't see in a quarter. And until we rebuilt their digital presence, AI had no idea they existed.

Google's AI Overviews began featuring our doctors in the AI-generated answers that sit above traditional search results: the same space where we had been invisible three months earlier.

Dr. Quiroz told me patients from the US were telling him they found him on ChatGPT. He didn't even know what ChatGPT was.

AI responses captured March 2026. Results vary as models update their knowledge.

Why this matters for your clinic

If you're reading this, your clinic probably has the same problem we had. You have the experience, the reviews, the credentials, and the outcomes. But AI doesn't know any of it. Not because your clinic isn't good enough. Because your digital presence wasn't built for the way AI reads information.

The patients who are finding your competitors on ChatGPT right now aren't choosing them because they're better surgeons. They're choosing them because AI can see them and it can't see you.

AI traffic to healthcare sites grew 527% year-over-year in 2025 (BrightEdge Research). Gartner predicts traditional search volume will drop 25% by end of 2026.

This isn't a trend you can wait out. Every month you're invisible to AI is a month your competitors are being recommended in your place.

The six things AI needs to see

Based on what we've observed working inside one of Tijuana's largest medical tourism clinics, these are the signals that appear to most consistently influence AI visibility. This is not a definitive model of how LLMs work. It's what we've seen produce results:

- Content authority. Structured, factual content about your doctors and procedures, published on websites you control.

- Schema markup. Machine-readable data (Physician, MedicalOrganization, MedicalProcedure, FAQPage) on every relevant page.

- Review volume and quality. Google reviews that mention the doctor by name, the procedure, and specific outcomes.

- Entity recognition. AI needs to recognize your doctor as a distinct professional entity associated with specific procedures and locations.

- Web presence consistency. Complete, accurate profiles across medical directories (RealSelf, Healthgrades, Doctoralia, Google Business Profile).

- Content freshness. Regular publishing of relevant, updated content signals ongoing authority.

These aren't theoretical. These are the patterns we observed, tested, and validated by getting real doctors cited by real AI platforms.
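Web presence consistency is the easiest of these signals to audit mechanically. The sketch below compares the same fields across directory profiles after normalizing cosmetic differences (case, spacing, phone punctuation) and reports only real disagreements. The profile data, placeholder phone numbers, and function names are hypothetical; in practice the values would be copied or exported from each directory, since this code does not fetch anything.

```python
import re
from collections import defaultdict


def normalize(field, value):
    """Normalize a profile field so cosmetic differences don't count as conflicts."""
    if field == "phone":
        return re.sub(r"\D", "", value)  # keep digits only
    return " ".join(value.lower().split())  # case-fold and collapse whitespace


def find_inconsistencies(profiles):
    """Return {field: {normalized value: [directories]}} for fields where directories disagree.

    `profiles` maps a directory name to a dict of field -> value.
    """
    seen = defaultdict(dict)
    for directory, fields in profiles.items():
        for field, value in fields.items():
            seen[field].setdefault(normalize(field, value), []).append(directory)
    return {field: values for field, values in seen.items() if len(values) > 1}


if __name__ == "__main__":
    # Hypothetical profile data with placeholder phone numbers.
    profiles = {
        "Google Business Profile": {"name": "Dr. Alejandro Quiroz", "phone": "+52 664 000 0000"},
        "RealSelf": {"name": "Dr. Alejandro  Quiroz", "phone": "+52 (664) 000-0000"},
        "Doctoralia": {"name": "Dr. A. Quiroz", "phone": "+52 664 000 0000"},
    }
    print(find_inconsistencies(profiles))
```

With the sample data, the phone numbers normalize to the same digits and drop out, while the name field is flagged because one directory lists "Dr. A. Quiroz" instead of the full name.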

What you can do right now

Before you hire anyone, before you spend any money, do this:

Open ChatGPT. Type "Best [your specialty] in Tijuana." See if your name comes up.

Open Gemini. Try the same query.

Open Google, run the same search, and check whether you appear in the AI Overview at the top of the results.

If you're not there, now you know why. And now you know what it takes to fix it.